Pick your computing paradigm: analog, digital, quantum (or even symbolic). Now think about the attempt to ascend the tree of abstraction, from logic gates to general intelligence. What’s missing? One answer I’ve been considering, inspired by “Reward is not the optimization target”, is this: others.

I’m not an all-in Girardian, but I do think it plausible, even likely, that our ideas and aversions are a consequence of triangular, mimetic dynamics. The following passage from Alex Danco’s “short and dangerous” introduction to mimetic desire illuminates* the concept at a basic level:

“As we grow up and live our lives, we watch others and learn what it is we ought to want. Aside from the basics, like food, water, shelter and sex, our desire for any particular object or experience is not hard-coded into our DNA; we’ve learned to want it by watching other people. But what is hard-coded into our DNA and hard-wired into our brains is the desire to be; and to belong. The true root of all desire, Girard and others argue, is never in the objects or the experience we pursue; it’s really about the other person from whom we’ve learned to want these things.

Girard calls these people the “mediators” or the “models” for our desire: at a deep neurological level, when we watch other people and pattern our desires off theirs, we are not so much acquiring a desire for that object so much as learning to mimic somebody, and striving to become them or become like them. Girard calls this phenomenon mimetic desire. We don’t want; we want to be.”

Think about what happens when a baby is born. They see other people, almost immediately. As they grow from baby to child to teen to adult, they’re immersed in and surrounded by the presence of others, modelling and mediating their own intentions and behaviour as a consequence of the behaviour and perceived intentions of these numerous people. Machines are not raised like this. We raise machines alone, in solitude.

A human’s being is continuously informed by others, at all times and in all places. It is swimming in a river of influence: sometimes going with the current, sometimes paddling furiously against it, other times crossing it from side to side, but its agency is always constrained by the current. A machine’s being, in contrast, is informed before it operates, in discrete steps, and within a vastly more limited span of space and time.

I’ve previously caricatured the technological stack as composed of three sections:

- The boring edge (e.g. silicon, electronics, hardware, low-level languages)

- The lumpy middle (e.g. web applications, common software)

- The bleeding edge (e.g. program synthesis, nanotech, AI, blockchain)

An example from each section highlights the absence of others in our dealings with machines.

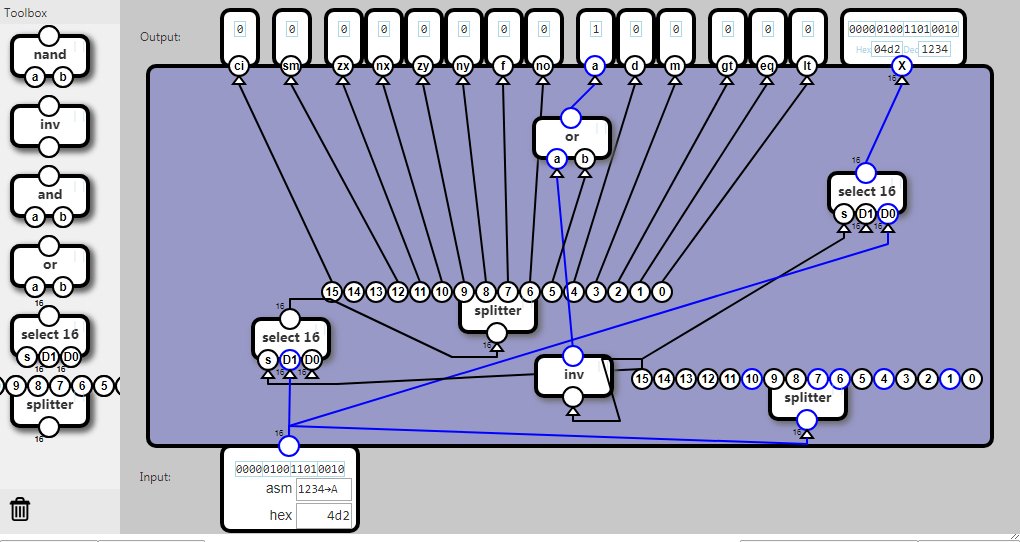

Boring edge: The Nand Game by Olav Junker Kjær

Here’s how the game is described: “You are going to build a computer starting from basic components … The game consists of a series of levels. In each level, you are tasked with building a component that behaves according to a specification. This component can then be used as a building block in the next level.”

Above is a picture of the final completed level (not achieved by me). Nowhere is there an affordance for an “other”, either in the game or in the domain this game is a proxy for. Different components may be interconnected, but upon active connection they become a part of the entity, a piece of the system itself. Contrast this with a human encountering another: upon seeing you I don’t subsume your self-ness into my own. The two are co-affected but remain distinct.
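To make the Nand Game’s premise concrete, here is a minimal Python sketch, not taken from the game itself: a single `nand` primitive, with every other component built only from parts defined before it. The names `inv`, `and_`, `or_`, `xor` and `half_adder` are my own, hypothetical ones. Notice that once a gate is used inside `half_adder`, it is simply part of the adder; nothing in the code treats it as a separate party.

```python
# A minimal sketch (not the Nand Game's own code): one primitive, and each
# new component composed only from components defined earlier.

def nand(a: bool, b: bool) -> bool:
    """The only primitive: false only when both inputs are true."""
    return not (a and b)


def inv(a: bool) -> bool:
    # NOT: feed the same signal into both NAND inputs.
    return nand(a, a)


def and_(a: bool, b: bool) -> bool:
    # AND: invert the output of a NAND.
    return inv(nand(a, b))


def or_(a: bool, b: bool) -> bool:
    # OR via De Morgan: a OR b == NAND(NOT a, NOT b).
    return nand(inv(a), inv(b))


def xor(a: bool, b: bool) -> bool:
    # XOR built from four NAND gates.
    n = nand(a, b)
    return nand(nand(a, n), nand(b, n))


def half_adder(a: bool, b: bool) -> tuple[bool, bool]:
    # Returns (sum, carry); the gates above are now just parts of this component.
    return xor(a, b), and_(a, b)


if __name__ == "__main__":
    for a in (False, True):
        for b in (False, True):
            print(int(a), int(b), "->", tuple(int(x) for x in half_adder(a, b)))
```

Running it prints the half adder’s truth table, one `a b -> (sum, carry)` row per input pair.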

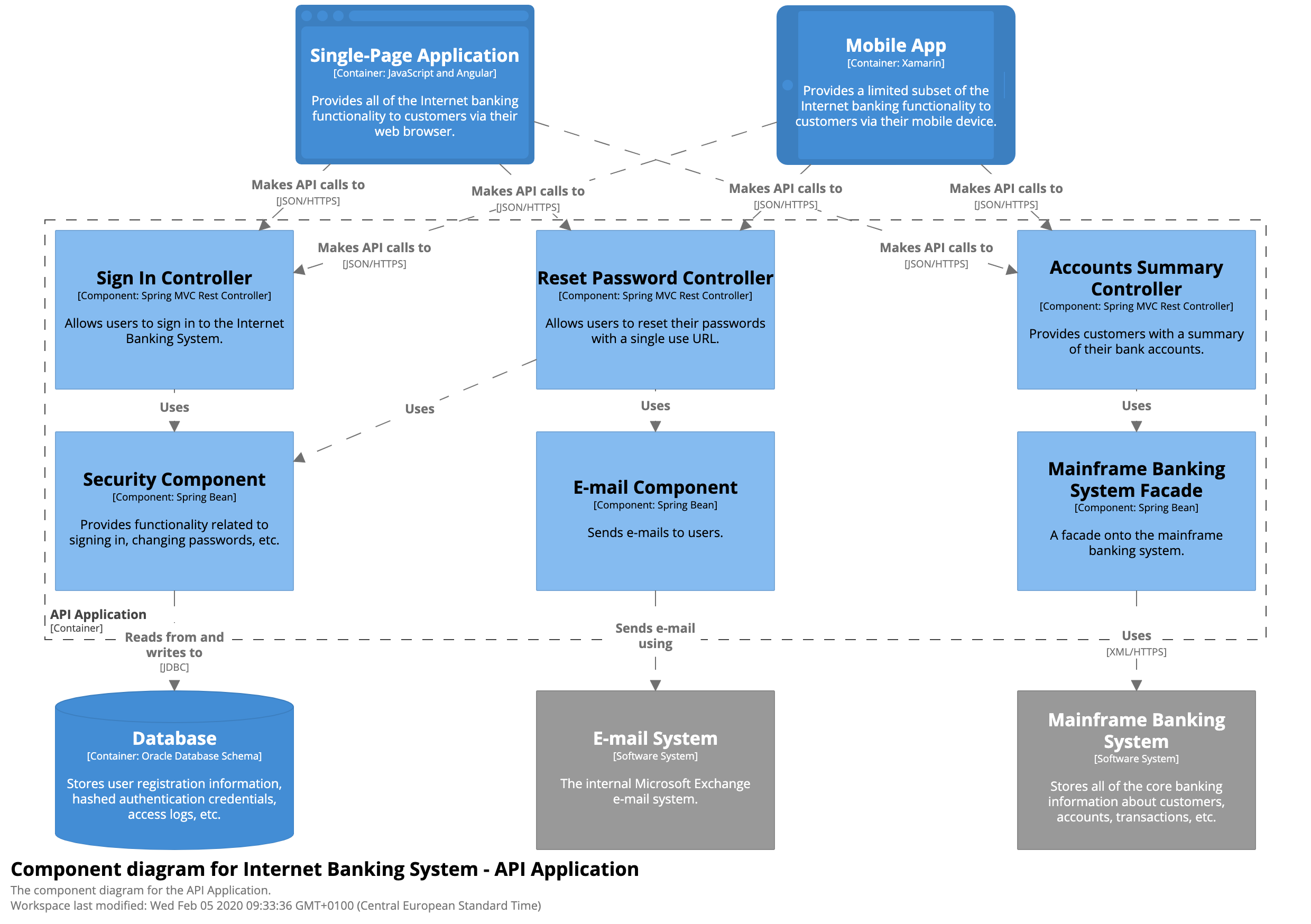

Lumpy middle: a generic web application

Others are absent in the lumpy middle, too. The operation of a software program may involve calls to helper functions, or perhaps appeals to the methods of a class to which an object belongs. The functions or methods are necessary to the operation of the program, and somewhat distinct—they retain a measure of separation from the program itself—but they are not perceived as an other.

Above is an example diagram of components within a single container, rendered using the C4 modelling approach.

Even in a web application where user-triggered events drive application response, the user is stripped of their otherness in order to make a legible contribution to the operation of the application.
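A minimal, hypothetical sketch of that event-driven pattern in Python follows. The `ClickEvent` schema, the `on` decorator and `dispatch` are illustrative names, not any particular framework’s API. However rich the person behind the click, the application only ever receives the two fields the event type declares.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ClickEvent:
    # Hypothetical schema: this is everything the application "knows" about the person.
    user_id: str
    target: str


Handler = Callable[[ClickEvent], str]
HANDLERS: dict[str, Handler] = {}


def on(target: str) -> Callable[[Handler], Handler]:
    """Register a handler for clicks on a given target."""
    def register(fn: Handler) -> Handler:
        HANDLERS[target] = fn
        return fn
    return register


@on("checkout")
def checkout(event: ClickEvent) -> str:
    # The user appears only as an identifier inside the application's own logic.
    return f"order created for {event.user_id}"


def dispatch(event: ClickEvent) -> str:
    """Route an event to its handler; events with no handler are ignored."""
    handler = HANDLERS.get(event.target)
    if handler is None:
        return "ignored"
    return handler(event)
```

For example, `dispatch(ClickEvent("u42", "checkout"))` returns `"order created for u42"`, while an event for an unregistered target returns `"ignored"`.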

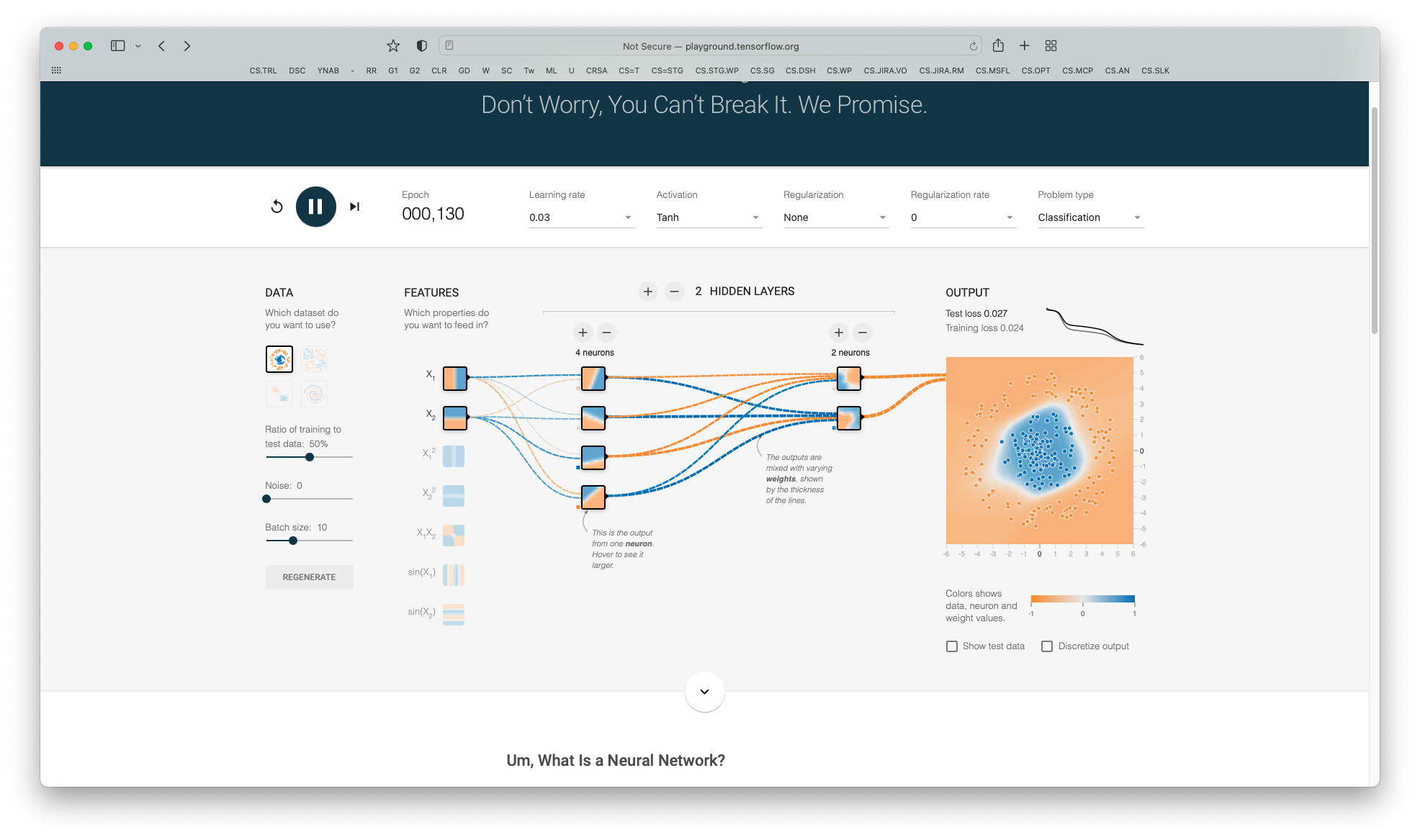

Bleeding edge: an interactive neural network playground by Daniel Smilkov and Shan Carter

Under the question, “Um, what is a neural network?”, is the following answer: “It’s a technique for building a computer program that learns from data. It is based very loosely on how we think the human brain works. First, a collection of software ‘neurons’ are created and connected together, allowing them to send messages to each other. Next, the network is asked to solve a problem, which it attempts to do over and over, each time strengthening the connections that lead to success and diminishing those that lead to failure.”

Even here, in a neural net loosely modelled on how we think the human brain works, the only “other” is a sub-agent of the same overarching system.
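The playground’s description of learning can be sketched with a single artificial neuron trained by gradient descent on the OR function. This is a simplification and an assumption on my part: the playground itself trains multi-layer networks, and the function names and hyperparameters here are illustrative. Each update nudges a connection stronger or weaker depending on whether that moves the output towards the target, and every weight being adjusted belongs to the same model; there is no other learner anywhere in the loop.

```python
import math
import random

# Truth table for OR: ((x1, x2), target)
OR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_neuron(data, epochs: int = 2000, lr: float = 0.5, seed: int = 0):
    """Fit one sigmoid neuron with per-example gradient descent on log loss."""
    rng = random.Random(seed)
    w = [rng.uniform(-1, 1), rng.uniform(-1, 1)]  # connection strengths
    b = 0.0
    for _ in range(epochs):
        for (x1, x2), target in data:
            y = sigmoid(w[0] * x1 + w[1] * x2 + b)
            err = y - target  # gradient of log loss w.r.t. the pre-activation
            # Active connections move in whichever direction brings the output
            # closer to the target (strengthened or diminished accordingly).
            w[0] -= lr * err * x1
            w[1] -= lr * err * x2
            b -= lr * err
    return w, b


def predict(w, b, x) -> int:
    return int(sigmoid(w[0] * x[0] + w[1] * x[1] + b) >= 0.5)


if __name__ == "__main__":
    w, b = train_neuron(OR_DATA)
    print([predict(w, b, x) for x, _ in OR_DATA])
```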

Are alternative approaches—less lonely machine-rearing methods—worthy of investigation? At the boring edge and lumpy middle? Probably not. Generating, sustaining and adapting conceptions of the other would be:

- too difficult given the (current) constraints on computing resources

- illogical given the lack of payoff from having an other present at such a low level

The bleeding edge is different.

Consider the case of a large language model. Christopher Manning’s talk On Large Language Models for Understanding Human Language provides some perspective on LLMs. It also highlights the rapid growth in the language data, as well as the overall compute, required to train the highest-performing LLMs. Contrast this with a young child’s ability to pick up multiple languages in a short span of time, somewhat passively, and whilst simultaneously developing other systemic capabilities (like walking).

The absence of others seems, to me, like a significant factor in how a machine learns to wield a language versus how we do—and one that Manning points to at the end of the talk. So let me pose some questions concerning it:

- What would happen if the training of a large language model included a two-way, continuous feedback step based on the perceived performance of a similar, simultaneously learning model—one of inferior, equivalent or superior capability?

- Further: what would happen if this continuous feedback step was based on the behaviour of many inferior, equivalent and/or superior simultaneously learning models?

- Even further: what would happen if the feedback generated from those numerous additional steps was given equivalent or greater weight than performance on training tasks?

- Extra far: what would happen if this network of continuous feedback was active from the genesis of the LLM itself?
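These questions can be turned into a toy experiment. What follows is a minimal sketch under loud assumptions, not an established method: each “model” is a one-variable linear regressor, “capability” is crudely stood in for by learning rate, and the “perceived performance” of peers is reduced to their current predictions. Each learner’s loss adds a peer term, weighted by a hypothetical `peer_weight` parameter, that pulls its prediction towards the mean of the others’ predictions. Setting `peer_weight` at or above 1 corresponds to giving peer feedback equivalent or greater weight than the task, and the coupling is active from the very first step.

```python
from dataclasses import dataclass


@dataclass
class Learner:
    """A toy linear model; `lr` is a crude stand-in for capability (assumption)."""
    w: float
    b: float
    lr: float

    def predict(self, x: float) -> float:
        return self.w * x + self.b


def train_together(learners: list[Learner],
                   data: list[tuple[float, float]],
                   peer_weight: float = 1.0,
                   epochs: int = 1000) -> list[Learner]:
    """Train all learners simultaneously, with peer feedback from step one."""
    for _ in range(epochs):
        for x, y in data:
            # Snapshot every prediction first, so feedback flows both ways at once.
            preds = [m.predict(x) for m in learners]
            for i, m in enumerate(learners):
                others = [p for j, p in enumerate(preds) if j != i]
                peer_mean = sum(others) / len(others)
                # Per-learner loss: (pred - y)^2 + peer_weight * (pred - peer_mean)^2,
                # with the peers' predictions treated as fixed; grad = d loss / d pred.
                grad = 2 * (preds[i] - y) + 2 * peer_weight * (preds[i] - peer_mean)
                m.w -= m.lr * grad * x  # chain rule: d pred / d w = x
                m.b -= m.lr * grad      # chain rule: d pred / d b = 1
    return learners


if __name__ == "__main__":
    data = [(k / 4, 2 * (k / 4) + 1) for k in range(5)]  # noiseless y = 2x + 1
    learners = [Learner(-1.0, 0.0, 0.02),  # "inferior": slow learner
                Learner(0.0, 0.0, 0.05),   # "equivalent"
                Learner(1.0, -1.0, 0.1)]   # "superior": fast learner
    for m in train_together(learners, data):
        print(f"w={m.w:.3f}  b={m.b:.3f}  lr={m.lr}")
```

With three learners of different speeds on noiseless data from y = 2x + 1, all three end up near w = 2, b = 1. In this toy, raising `peer_weight` stiffens the coupling, so the learning rates may need to shrink to keep the updates stable.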

I don’t know whether such training of machines has been tried. Cursory searches turn up research into parallel or simultaneous model training, but I see no scheme that focuses on integrating and prioritising continuous feedback between numerous, simultaneously-learning-but-distinct models.

Do you know of any such initiatives? Do you know someone who might? Please ask them. I’d like to find out. My suspicion is that, if tried, it would lead to some interesting results. Potentially nonsensical, but sufficiently interesting nonetheless.

*For an introduction to Girard, the man and his perspective, I’d recommend Cynthia Haven’s Evolution of Desire; for a deeper introduction to the theory itself, René Girard’s Mimetic Theory by Wolfgang Palaver. The latter is the one book I’d recommend to anyone not familiar with Girard.